How We Render Animated Content From HTML5 Canvas

Overview

Animatron provides users with the ability to embed animations into web pages using HTML code, or to view the animation directly via a link generated by the Editor. However, this isn’t enough, since a user may want to view their animation offline or send it as an attachment to another user. For this reason, Animatron provides a way to publish animations to “offline” formats, like an animated GIF image or a video file. Users may set the publishing format in the Publish dialogue box, located in the Inspector Panel of the Editor.

This article will reveal some of the internal details of the process of publishing an animation from the Editor.

The Editor uses HTML5 canvas for drawing graphical elements and shapes, adding pictures, and so on. All items placed on the HTML5 canvas are saved in an internal document model (DOM), which, in turn, is translated into a text file. That text file is nothing more than a custom (and somewhat tricky) JSON document, which holds not only the graphical shapes but also the operations on those shapes and everything else required to perform an animation.

An Animatron animation, outside of the Editor, contains an open-source JavaScript module called the Animatron Player. This player component is responsible for taking the JSON document produced by the Editor and rendering it on the HTML5 canvas. The rendering process takes into account all items and their transformations, and in general is able to show a “frame” at any point on the animation’s timeline. All of these frames put together make up an animated project. Of course, this is a very general overview, intended just to give you some idea of how the rendering process works.

The Challenge

Hopefully, it’s clear by now that movie rendering is performed strictly in the context of the browser, but we want to convert the animation to a video clip somehow. At the very beginning, the idea was to adapt the player to run inside a Java application, so that we could re-use the Java2D canvas and have the player component call its API. But as the team investigated this option further, it turned out that we’d have to re-invent almost the entire HTML5 canvas API, which was way out of the scope of our project. That would have required a lot of time and effort, which we couldn’t afford at that stage.

Then we turned our thoughts from that most basic implementation (somebody might call this JavaScript trick with Java2D “close to bare metal”) to a more abstract level: if we can’t reinvent HTML5 canvas, why can’t we get the browser to do the work for us? So initially we thought about capturing the framebuffer of a “real” browser in a “real” desktop environment (using the X Window System), but the conclusion was the same as with Java2D: it would take a lot of time and effort to get the entire system up and running.

The Solution

We are living in a wonderful era! There are plenty of libraries and software components that are easily available and can do almost anything. We were lucky enough to find an awesome “headless” browser, PhantomJS, which is a browser implementation based on the WebKit core. It is called “headless” because it doesn’t require any graphical UI or display support from the operating system, so it can be started on almost any Linux server. The task was to get the browser to “load” a page which has the Animatron player embedded, pass the JSON with the movie to it, and play the movie.

It worked! We were able to create very simple HTML pages (templates) for the browser, which could retrieve JSON documents from the external network, create an instance of the player inside a PhantomJS instance, and play the animation! So the next task was to “extract” frames from the PhantomJS instance somehow, and again, the solution was already there. PhantomJS provides a rich and comprehensive API for taking “screenshots” of the browser and saving them as files on disk. To reach our goal we only needed to “glue” the set of images from PhantomJS into a movie or a GIF image. And again, there’s a program that can do that trick for us: ffmpeg. Isn’t it awesome that when facing a problem you can just look around for a solution and (in most cases) find it right away? Note: you may have to pare down the finished product to suit your purposes.

So in general, now that we have PhantomJS producing a set of images, and ffmpeg, which can consume the images and produce a video file, things seem to be almost done. But wait! Imagine an animation that’s, say, 5 minutes long. 5 minutes is 300 seconds, and in order to produce a really smooth animation, it has to have a frame rate close to 24 frames per second. Multiplying gives 300 x 24 = 7200. Over seven thousand files, just for a five-minute movie! And remember: we’re expecting to eventually have a huge community of avid Animatron users, so the chances are extremely high that many people will start to export video files from their projects in the Editor all at once. We don’t want to fail at this point!
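The arithmetic above can be written down as a small helper. This is only an illustrative sketch: the function names `frame_count` and `frame_times` are made up for this example and are not part of the Animatron code.

```python
def frame_count(duration_seconds: float, fps: int = 24) -> int:
    """Number of frames needed to cover the whole timeline at the given rate."""
    return round(duration_seconds * fps)


def frame_times(duration_seconds: float, fps: int = 24):
    """Yield the timeline position (in seconds) of every frame, in order."""
    for index in range(frame_count(duration_seconds, fps)):
        yield index / fps


# A five-minute movie at 24 frames per second.
print(frame_count(5 * 60))  # 7200
```

Each value produced by `frame_times` is a point on the timeline at which the player would be asked to draw one frame.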

Moreover, these 7200 files don’t need to be accessible at the same time, because ffmpeg will consume them incrementally, one by one – and it doesn’t need to jump ahead and back in that set of files.

As a solution, we decided to use Unix pipes, which allow us to start “Program A” and redirect its output to the input of “Program B.” So instead of creating thousands of files, PhantomJS simply writes the frames to stdout, and ffmpeg reads those frames from stdin, producing a single movie file.
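A minimal sketch of such a pipe, written in Python rather than the Scala used in production, might look like the following. It rests on several assumptions: `render_frames.js` is a hypothetical PhantomJS script that writes every frame to stdout as a PNG image, the way it receives the movie URL and frame rate as arguments is invented for this example, and the ffmpeg options are one plausible way to read a stream of PNG images, not necessarily the ones Animatron uses.

```python
import subprocess
from typing import List, Tuple


def phantomjs_command(script: str, movie_url: str, fps: int) -> List[str]:
    # Hypothetical script and arguments: the script is assumed to load the
    # player, step through the timeline, and write one PNG per frame to stdout.
    return ["phantomjs", script, movie_url, str(fps)]


def ffmpeg_command(fps: int, output: str) -> List[str]:
    return [
        "ffmpeg", "-y",
        "-f", "image2pipe",       # input is a stream of concatenated images
        "-c:v", "png",            # every image in the stream is a PNG
        "-framerate", str(fps),   # how many images make up one second
        "-i", "-",                # read the stream from stdin
        "-pix_fmt", "yuv420p",    # widely supported pixel format for players
        output,
    ]


def render_movie(script: str, movie_url: str, output: str,
                 fps: int = 24) -> Tuple[int, int]:
    """Run PhantomJS and ffmpeg connected by a pipe; return both exit codes."""
    producer = subprocess.Popen(
        phantomjs_command(script, movie_url, fps), stdout=subprocess.PIPE
    )
    consumer = subprocess.Popen(
        ffmpeg_command(fps, output), stdin=producer.stdout
    )
    # Close our copy of the pipe so the producer notices if ffmpeg exits early.
    producer.stdout.close()
    consumer_code = consumer.wait()
    producer_code = producer.wait()
    return producer_code, consumer_code
```

Calling `render_movie("render_frames.js", "http://example.com/movie.json", "movie.mp4")` would then produce the movie without a single frame being stored on disk.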

Initially, ffmpeg was used to create animated GIF files as well; however, the quality of those files was awful. This seems to be the result of a problem with ffmpeg that has been reported for at least the past few years.

As an example, just compare the GIF image created by ffmpeg …

…With the image created by ImageMagick:

So the difference is clear enough – FFMPEG doesn’t work well with dithering. In turn, ImageMagick cannot work with streamed images. Dead end?

We tried to use gifsicle, which seemed to be able to handle GIF files from standard input; however, it expects its input to be GIF files. So we tried to get PhantomJS to save screenshots in GIF format, but faced another issue, this time with the color palettes. Compare the images produced from the same movie in GIF format:

And PNG format:

The colors in the images are different, and the result doesn’t look like it does in the original movie, which you can see in the Editor or as an HTML5 movie.

We tried to play with some open-source components like GifSequenceWriter; however, they didn’t give good results, and the colors were still screwed up. So we ended up purchasing an amazing library, Gif4J, which solved the color problem and can read images from a stream: the best of both worlds! The sizes of the resulting images are:

- ffmpeg: 18 megabytes

- ImageMagick: 7 megabytes

- Gif4J: 10 megabytes

And the result looks like this:

Adding Some Audio

Long ago, people enjoyed silent movies, but even then, the movies were more enjoyable when they were accompanied by some live piano music. Nowadays, no one will watch a movie without some sound effects; it simply doesn’t make any sense! So Animatron supports adding audio tracks to an animation, and the player can play audio as well. But what about the creation of movie files? GIF images don’t support audio streams at all, so unfortunately it’s not possible to add audio to an animated image. But what about movie files: can we have some audio there?

Both the MP4 and WebM video file formats support audio playback, so it’s only a matter of adding the audio streams somehow. If we have only one audio track attached to an animation, we simply add that stream to the video file rendered by the PhantomJS->ffmpeg chain. But what if we have more than one audio track (or clip)? What if these audio clips overlap, or have some gaps between them? ffmpeg cannot deal with such situations, so we had to find a workaround. And again, “everything was stolen before we came!” There is yet another awesome tool: SoX. This command-line application allows you to do a lot of different things, in particular to glue together audio files, pad these files with silence if needed, and mix parts (or entire files) into a single audio stream. So the task is:

- extract all audio clips from the animation

- download appropriate audio files from external storage

- calculate start time (from the beginning of the animation) and the length of every clip in the animation

- prepare a complex command line for gluing the audio together

- invoke sox and get the audio file, mixed from all the files from the animation

- add that audio file to the generated movie using ffmpeg

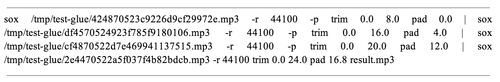

It’s that simple! If you’re wondering how complex a SoX command line can be, here is a basic example:
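To give a feeling for how such a command line could be assembled from the start time and length of each clip, here is a hedged Python sketch. It is not the command Animatron actually runs. The `AudioClip` type and the `sox_mix_command` function are invented for this illustration, and the approach (trimming and padding each clip in a nested `sox` call, then mixing the results with `-m`) is just one common way to use SoX for this purpose.

```python
import shlex
from dataclasses import dataclass
from typing import List


@dataclass
class AudioClip:
    path: str      # local audio file, assumed to be downloaded already
    start: float   # seconds from the beginning of the animation
    length: float  # seconds of the clip that should be heard


def sox_mix_command(clips: List[AudioClip], output: str) -> List[str]:
    """Build a sox invocation that places every clip at its start time."""
    if not clips:
        raise ValueError("at least one audio clip is required")
    if len(clips) == 1:
        # Mixing needs at least two inputs, so a single clip is only
        # trimmed to its length and padded with leading silence.
        clip = clips[0]
        return ["sox", clip.path, output,
                "trim", "0", f"{clip.length:.3f}",
                "pad", f"{clip.start:.3f}", "0"]
    # Each input is itself a sox command ("|sox ... -p") that trims the clip
    # and prepends silence, so overlaps and gaps fall out of the mix naturally.
    inputs = [
        f"|sox {shlex.quote(clip.path)} -p "
        f"trim 0 {clip.length:.3f} pad {clip.start:.3f} 0"
        for clip in clips
    ]
    return ["sox", "-m", *inputs, output]


clips = [AudioClip("intro.wav", 0.0, 4.0), AudioClip("song.wav", 2.5, 10.0)]
print(sox_mix_command(clips, "mixed.wav"))
```

The returned list can be passed directly to `subprocess.run`. Even with two clips the command is already long, and it grows by one nested `sox` call for every additional clip.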

Putting It All Together

So, with the help of various open-source tools, it is possible to:

- run JavaScript code inside the PhantomJS “browser”

- capture images generated by PhantomJS, and pass them to ffmpeg

- create a movie from the images with ffmpeg

- build up a single audio file from a set of smaller files, processing overlaps and gaps with sox

- invoke sox and get the audio file, mixed from all the files from the animation

- glue together the audio and video streams to produce the final video

Of course, it does require some code to be written in order to manage the PhantomJS processes and pass the output images from PhantomJS to ffmpeg. Extra work for housekeeping and dealing with external resources is needed as well. The entire codebase was written using Scala and Akka and is powered by the fantastic REST framework Spray.

Eugene Dzhurinsky

Dark Lord of ServerSide Orcs